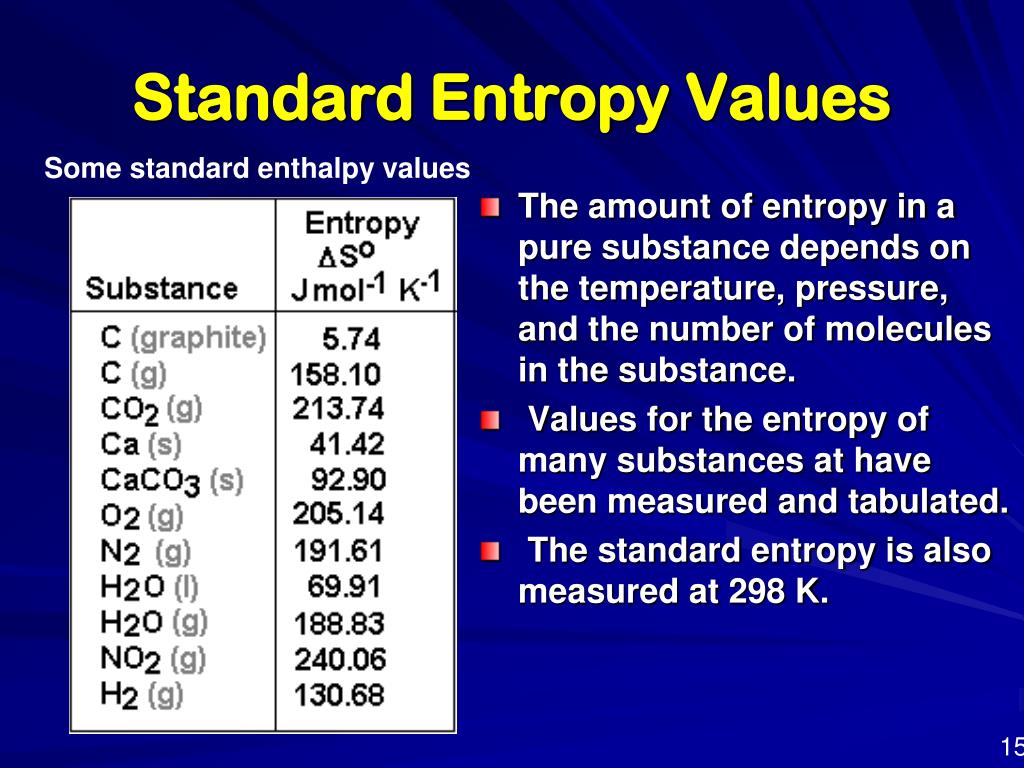

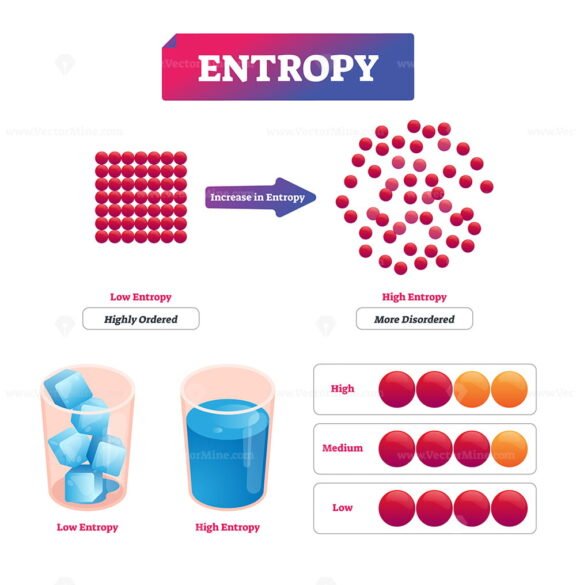

An increasing number of studies across many research fields, from biomedical engineering to finance, are employing measures of entropy to quantify the regularity, variability or randomness of time series and image data. Entropy, as it relates to information theory and dynamical systems theory, can be estimated in many ways, with newly developed methods being continuously introduced in the scientific literature. Despite the growing interest in entropic time series and image analysis, there is a shortage of validated, open-source software tools that enable researchers to apply these methods. To date, packages for performing entropy analysis are often run using graphical user interfaces, lack the necessary supporting documentation, or do not include functions for more advanced entropy methods, such as cross-entropy, multiscale cross-entropy or bidimensional entropy. In light of this, this paper introduces EntropyHub, an open-source toolkit for performing entropic time series analysis in MATLAB, Python and Julia. EntropyHub (version 0.1) provides more than forty functions for estimating cross-, multiscale, multiscale cross-, and bidimensional entropy, each including a number of keyword arguments that allow the user to specify multiple parameters in the entropy calculation.

Instructions for installation, descriptions of function syntax, and examples of use are fully detailed in the supporting documentation, available on the EntropyHub website. Compatible with Windows, Mac and Linux operating systems, EntropyHub is hosted on GitHub, as well as on the native package repositories for MATLAB, Python and Julia. The goal of EntropyHub is to integrate the many established entropy methods into one complete resource, providing tools that make advanced entropic time series analysis straightforward and reproducible.

Through the lens of probability, information and uncertainty can be viewed as counterparts: the more uncertainty there is, the more information we gain by removing that uncertainty. This is the principle behind Shannon's formulation of entropy (1), which quantifies uncertainty as it pertains to random processes, where H(X) is the entropy (H) of a sequence (X) given the probabilities (p) of states (xᵢ). An extension of Shannon's entropy, conditional entropy (2) measures the information gained about a process (X) conditional on prior information given by a process (Y), where y may represent states of a separate system or previous states of the same system. Numerous variants have since been derived from conditional entropy, and to a lesser extent Shannon's entropy, to estimate the information content of time series data across various scientific domains, resulting in what has recently been termed "the entropy universe".

This universe of entropies continues to expand as more and more methods are derived with improved statistical properties over their precursors, such as robustness to short signal lengths, resilience to noise, and insensitivity to amplitude fluctuations. Furthermore, new entropy variants are being identified which quantify the variability of time series data in specific applications, including assessments of cardiac disease from electrocardiograms and examinations of machine failure from vibration signals. As the popularity of entropy spreads beyond the field of mathematics to subjects ranging from neurophysiology to finance, there is an emerging demand for software packages with which to perform entropic time series analysis. Open-source software plays a critical role in tackling the replication crisis in science by providing validated algorithmic tools that are available to all researchers. Without access to these software tools, researchers lacking computer programming literacy may resort to borrowing algorithms from unverified sources, which could be vulnerable to coding errors. Software packages also often serve as entry points for researchers unfamiliar with a subject to develop an understanding of the most commonly used methods and how they are applied. This point is particularly relevant in the context of entropy, a concept that is often misinterpreted, and where the number and naming of variant methods may be difficult to follow.
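To make the two quantities cited earlier as (1) and (2) concrete, here is a minimal sketch in plain Python. It assumes the standard textbook forms, H(X) = -Σ p(xᵢ) log p(xᵢ) and H(X|Y) = -Σ p(xᵢ, yⱼ) log p(xᵢ | yⱼ), with probabilities estimated from simple frequency counts over discrete symbols. The function names `shannon_entropy` and `conditional_entropy` and the base-2 default are illustrative choices, not part of EntropyHub's API, and the estimators EntropyHub implements for real-valued signals are more involved than this.

```python
import math
from collections import Counter


def shannon_entropy(x, base=2):
    """Frequency-count estimate of H(X) = -sum_i p(x_i) * log p(x_i)."""
    n = len(x)
    counts = Counter(x)
    return -sum((c / n) * math.log(c / n, base) for c in counts.values())


def conditional_entropy(x, y, base=2):
    """Frequency-count estimate of H(X|Y) = -sum_ij p(x_i, y_j) * log p(x_i | y_j)."""
    if len(x) != len(y):
        raise ValueError("x and y must have the same length")
    n = len(x)
    joint = Counter(zip(x, y))   # counts of each (x_i, y_j) pair
    marginal_y = Counter(y)      # counts of each y_j
    # p(x_i, y_j) = c / n and p(x_i | y_j) = c / count(y_j)
    return -sum(
        (c / n) * math.log(c / marginal_y[yj], base)
        for (_, yj), c in joint.items()
    )


# Hypothetical example: a strictly alternating binary sequence.
signal = [0, 1, 0, 1, 0, 1]

# Taken alone, each symbol is equally likely: about 1 bit of uncertainty.
h = shannon_entropy(signal)

# Conditioning each state on the previous state of the same system removes
# that uncertainty, because the next symbol is fully determined: 0 bits.
h_given_previous = conditional_entropy(signal[1:], signal[:-1])
```

The second call uses the "previous states of the same system" reading of Y: the sequence is paired with a copy of itself shifted by one sample. Conditioning on a separate system would use the same function with a second, equally long sequence.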
